Swiss AI risk assessment startup Calvin Risk has raised $1.5 million of pre-seed funding from VC investors btov Partners and Wingman Ventures.

Having spun off earlier this year from the Swiss institute ETH Zurich, Calvin Risk is working on a risk and auditing management toolkit to help AI developers overcome the "black box" problem of commercial algorithms lacking human accountability.

The datasets fed to AI are susceptible to human biases that cause inaccurate automated judgements. These pitfalls could lead to some hefty compensation payouts, creating a market for auditing, risk quantification and evaluation metrics.

With its risk management system, Calvin Risk is targeting three leading users of AI: insurance, pharmaceuticals and tech. It is hoped these industries will be able to "continuously monitor" projects using Calvin's auditing tools, particularly when accounting for new features and their ethical and regulatory implications.

Controversy continues to stalk the intersection of ethics and AI. Last week saw critics slam OpenAI's introduction of a new GPT offshoot for automated chatbots over fears the public demo will enable cheating on academic essays, after a supposedly competent showing of its essay-writing abilities.

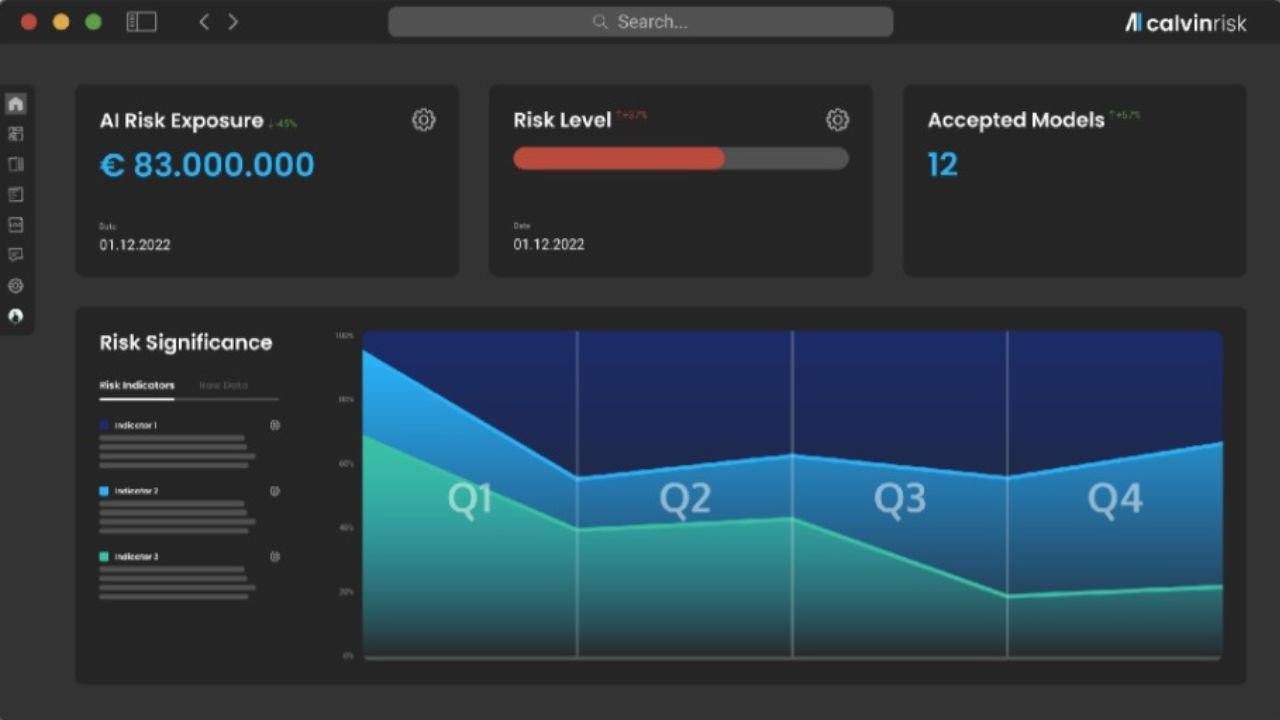

While Calvin Risk cannot prevent these pitfalls alone, it's promising to do much of the grunt work to flag up risks so action can be taken. Its vision of trustworthy AI relies on applying robust criteria for judging its performance, fairness and explainability, and then soldering the framework down so that it's referred back to in every development process. On top of the framework is an overview that's designed to be understood by every stakeholder, from team leads to risk auditors.

The startup's chief technology officer, Syang Zhou, said: "Ensuring that the regulatory goals of a humanity-centric AI are harmonized with the need for innovation is a key part of Calvin Risk's efforts."

"Our platform enables the alignment between safer and higher-quality models, and maintaining the ethical efforts of humanity, while improving process efficiency. The results are trustworthy and effective evaluation processes."

Regulating AI is a global priority and one in which the EU has sought an early lead. With its forthcoming compliance law for AI development, the EU again aims to be a regulatory pioneer following its earlier championing of data privacy. But 2024 is the earliest Brussels expects the AI law to be actioned, so developers have time to drill down on compliance.

Calvin Risk adviser Tarek Besold, who heads strategic AI at Stuttgart-based digital transformation company DEKRA Digital, says EU efforts to build public trust in AI will rely on creating more visibility of its risks.

"Sustained innovation requires market acceptance of products – understanding risk helps consumers to become emancipated actors in the market, overcoming reservations and accepting new technologies. This then allows further innovation."

Calvin Risk's software platform will ultimately aim to calculate the precise probability of ethical failures in AI, bringing a standard for what it sees as "humanity-centric" automation that's constantly tuned to society's expectations.

With this initial pre-seed raise, the startup plans to expand R&D headcount and prepare validation studies for its outreach to the insurance, pharmaceuticals and tech sectors. According to CEO Julian Riebartsch, developers of established AI platforms are likely to benefit most.

"An organization profits most from our platform if their AI efforts are fairly mature," said Riebartsch. "An example would be a company that has already developed and deployed some algorithms, but wants to improve its quality and standardization, and professionalize the roll-out process.

"Furthermore, the more ubiquitous AI becomes at larger organizations, the greater the benefit of our product."
